I try to let big news percolate for a few days before weighing in, and it seemed even more appropriate to follow that playbook with the scrum around Marissa Mayer joining Yahoo.

Given the headlines, questions, and legal actions Google has faced recently, many folks, myself included, have been wondering when Google’s CEO Larry Page would take a more public stance in outlining his vision for the company.

Well, today marks a shift of sorts, with the publication of a lengthy blog post from Larry titled, quite uninterestingly, 2012 Update from the CEO.

I’ve spent the past two days at Amazon and Microsoft, two Google competitors (and partners), and am just wrapping up a last meeting. I hope to read Page’s post closely and give you some analysis as soon as I can. Meanwhile, a few top-line thoughts and points:

Over the past few months I’ve been developing a framework for the book I’ve been working on, and while I’ve been pretty quiet about the work, it’s time to lay it out and get some responses from you, the folks I most trust with keeping me on track.

I’ll admit the idea of putting all this out here makes me nervous. I’ve only discussed this with a few dozen folks, and now I’m going public with what is, admittedly, an unbaked cake. Anyone can criticize it now (or, I suppose, steal it), but then again, I did the very same thing with the core idea in my last book (The Database of Intentions, back in 2003), and that worked out just fine.

So here we go. The original premise of my next book is pretty simple: I’m trying to paint a picture of the kind of digital world we’ll likely live in one generation from now, based on a survey of where we are presently as a digital society. In a way, it’s a continuation and expansion of The Search: the database of intentions has expanded from search to nearly every corner of our world, and we now live our lives leveraged over digital platforms and data. So what might that look like thirty years hence?

It took longer than I thought it would, but it’s finally happened. Apple has admitted that it needs real search to bring its tangled app universe to heel, and has purchased Chomp, a leading third-party app review and search service.

Nearly two years ago I wrote this piece: Apple Won’t Build a (Web) Search Engine. From it:

…but it will build the equivalent of an app search engine. It’s crazy not to. In fact, it has to. It already has app discovery via the iTunes store, but it’s terrible, with no signal that gives reliable results based on accrued intent.

I don’t have Siri yet; I’m still using my “old” iPhone 4. But I do have my hands on a new (unboxed) Nexus, which has Google Voice Actions on it, and I’m sure at some point I’ll get an iPhone 4S. So this post isn’t written from experience so much as it’s pure speculation, or as I like to call it, Thinking Out Loud.

But driving into work yesterday, I realized how useful voice search is going to be to me once I’ve got it installed. Stuck in traffic, I tried searching for alternate routes, and it struck me how much easier it’d be to just say “give me alternate routes.” That got me thinking about all manner of things, many of which are now possible: “Text my wife I’ll be late,” “Email my assistant and ask her to print the files for my 11 am meeting,” and “Find me a good liquor store within a mile of here” (I’ve actually done that one using Siri on my way to a friend’s house last weekend).

I’ve written about this before, of course (see Texting Is Stupid, for one example from over three years ago), and I predicted in 2011 that voice was going to be a game changer. It clearly is, but now my question is this: What’s the business model?

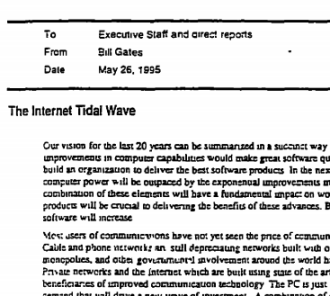

Who remembers the moment, back in 1995, when Bill Gates wrote his famous Internet Tidal Wave Memo? In it he rallied his entire organization to the cause of the Internet, calling the new platform an existential threat/opportunity for Microsoft’s entire business. In the memo Gates wrote:

“I assign the Internet the highest level of importance. In this memo I want to make clear that our focus on the Internet is crucial to every part of our business. The Internet is the most important single development to come along since the IBM PC was introduced in 1981.”

The memo runs more than 5,300 words and includes highly detailed product plans across all of Microsoft. In retrospect, it probably wasn’t a genius move to be so transparent: the memo became public during the US Dept. of Justice action against Microsoft in the late 1990s.

Given that Google+ results are dominating so many SERPs these days, Google is clearly leveraging its power in search to build up Google+. Unless a majority of people start turning SPYW (Search Plus Your World) off, or decide to search while logged out, Google has positioned Google+ as a sort of “mini Internet,” a place where you can find results for a large percentage of your queries. (My source is pretty direct about this: “Google has decided that beating Facebook is worth selling their soul.”)

But to my point. A case in point is the search I did this morning for that Hitler video I posted. Here’s a screenshot of my results:

I’ve just been sent an official response from Google to the updated version of my story posted yesterday (Compete To Death, or Cooperate to Compete?). In that story, I reported on 2009 negotiations over incorporating Facebook data into Google search. I quoted a source familiar with the negotiations on the Facebook side, who told me “Senior executives at Google insisted that for technical reasons all information would need to be public and available to all,” and “The only reason Facebook has a Bing integration and not a Google integration is that Bing agreed to terms for protecting user privacy that Google would not.”

I’ve now had conversations with a source familiar with Google’s side of the story, and to say the company disagrees with how Facebook characterized the negotiations is putting it mildly. I’ve also spoken to my Facebook source, who has clarified some nuances as well. To get started, here’s the official, on-the-record statement from Rachel Whetstone, SVP Global Communications and Public Affairs:

“We want to set the record straight. In 2009, we were negotiating with Facebook over access to its data, as has been reported. To claim that we couldn’t reach an agreement because Google wanted to make private data publicly available is simply untrue.”

Ten years ago, Google’s first Zeitgeist inspired my first book (The Search). Here are some highlights from the tenth annual edition. Props to Google for going deeper than most year-end lists with its data. Now…open source the damn data, folks!!!

He ran me through Recorded Future’s technology and business model, and I found it impressive. In fact, I’m hoping I can somehow employ it in my book research. And that conditional “hoping” points to the main problem I have with Ahlberg’s creation: it’s a rather complicated system to use. Then again, what of worth isn’t, I suppose?

Recorded Future is, at its core, a semantic search engine that consumes tens of thousands of structured information feeds as its “crawl.” It then parses this corpus for several core assets: Entities, Events, and Time (or Dates). Recorded Future’s algorithms are particularly adept at identifying and isolating these items, then correlating them at scale. If that sounds simple, it ain’t.
